The AI can review its own work. That's the argument. And technically, it's not wrong - you can absolutely ask an LLM to audit the code it just generated. But here's what that argument quietly ignores: a reviewer is only as good as the standards they're held to. If you don't know what to demand, you'll accept whatever comes back. And what comes back is often a confidently presented, syntactically clean, functionally dangerous mess.

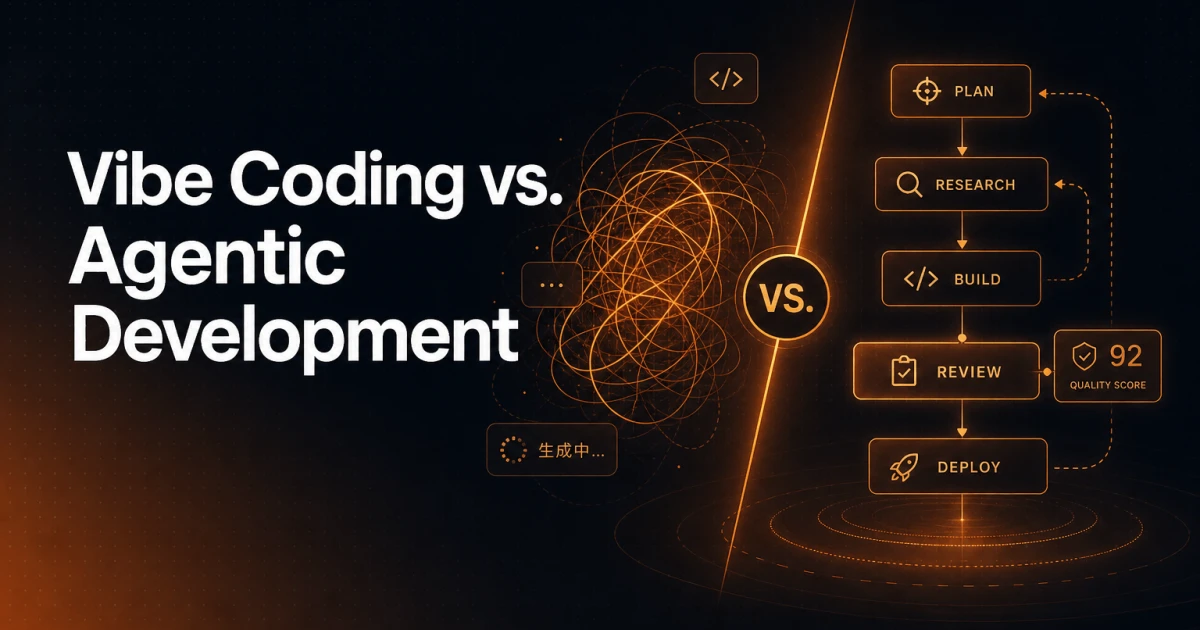

This is the core tension between vibe coding and agentic development - and the difference matters far more than most people in the "just ship it with AI" crowd are willing to admit.

What Vibe Coding Actually Is

Vibe coding isn't a formal methodology. It's a pattern: a non-developer (or a developer who has stopped thinking critically) uses an AI assistant to generate entire features, modules, or applications by describing what they want in plain language. The output looks like code. It runs. It might even pass a quick manual test. So it ships.

The "vibe" part is accurate in the worst possible way - the whole process runs on feel. Does it seem to work? Does the AI say it's good? Great, we're done.

Agentic development is something different. It uses AI as a force multiplier inside a structured workflow - where a developer with real domain knowledge directs agents, validates outputs, sets constraints, and catches what the model gets wrong. The AI does more of the heavy lifting, but the human brings the judgment that the model fundamentally cannot.

These are not two points on the same spectrum. They are categorically different approaches with categorically different risk profiles.

The Self-Review Illusion

Ask an LLM to write a login form, then ask it to review the same code for security issues. In many cases, it will find nothing wrong - or surface minor, cosmetic concerns while missing the actual vulnerabilities. This isn't a bug in the model. It's a structural limitation.

AI models are trained to be helpful and coherent. They optimize for plausible, confident responses. When asked to critique their own output, they're not running an independent audit - they're pattern-matching against what a security review is supposed to sound like. If you don't know enough to push back on what they miss, you'll walk away thinking the code is clean.

A vibe coder asking "is this secure?" will often get something like "yes, though you may want to consider adding rate limiting" - and they'll nod and move on. A developer who knows what XSS, CSRF, and injection attacks look like will ask pointed, specific questions and recognize when the answer is evasive or incomplete.

The self-review isn't worthless. But it's only useful when the person reading the review has enough context to know what's missing.

The Vulnerabilities That Slip Through

XSS - Cross-Site Scripting

Cross-site scripting happens when user-supplied input gets rendered in the browser without proper escaping. An attacker injects a script tag into a comment field, a search query, or a form input - and that script runs in the context of your site, with access to any cookie or session token not flagged HttpOnly, plus everything else the page itself can read or do. AI-generated code that outputs user data to the DOM without escaping is not a hypothetical risk. It's a common default pattern.

A vibe coder sees a comment section that displays comments. It works. The AI said it reviewed it. What they don't see is that every comment field is a potential injection point, and none of the output is being escaped. A developer recognizes this immediately and knows exactly what to look for - innerHTML vs textContent, unescaped template variables, missing Content Security Policy headers.
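
As a rough illustration of the escaping gap described above, here is a minimal server-side sketch in Python. The function names and the payload are hypothetical, chosen only for this example. It uses the standard library's html.escape, and it assumes comments are rendered into HTML on the server. The browser-side equivalent is the innerHTML vs textContent distinction already mentioned.

```python
from html import escape


def render_comment_unsafe(author: str, body: str) -> str:
    # Vulnerable: user input is interpolated into the markup as-is,
    # so any tags it contains become part of the page.
    return f"<li><b>{author}</b>: {body}</li>"


def render_comment_safe(author: str, body: str) -> str:
    # html.escape turns &, <, > and (by default) quote characters into
    # entities, so the browser displays the input as text.
    return f"<li><b>{escape(author)}</b>: {escape(body)}</li>"


# Hypothetical hostile comment, for illustration only.
payload = "<script>steal(document.cookie)</script>"

unsafe_html = render_comment_unsafe("mallory", payload)
safe_html = render_comment_safe("mallory", payload)

print(unsafe_html)
print(safe_html)
```

Both versions "work" when the tester types a normal comment, which is why the difference is easy to miss. Escaping at output time is only one layer. A Content Security Policy and a templating engine that auto-escapes by default are the usual companions.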

Input Sanitization - The Gatekeeper Nobody Thinks About

Every piece of data that enters your application from the outside world is hostile until proven otherwise. Forms, API payloads, URL parameters, file uploads - all of it needs validation and sanitization before it touches your database, your filesystem, or your rendering layer.

AI-generated code frequently skips this or handles it superficially. It might add a required attribute to an HTML input field and call that "validation," even though anyone who sends the request directly bypasses a browser-side check. It might trim whitespace and check for empty strings. What it often won't do, unless explicitly instructed by someone who knows to ask, is validate data types, enforce length limits, strip dangerous characters, or use parameterized queries to prevent SQL injection.

The vibe coder's app accepts any input. It probably works fine in testing, because the tester isn't trying to break it. The first person who does will have a very productive afternoon.
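
To make the checks listed above concrete, here is a small sketch using Python's built-in sqlite3 module. The table, the field names, and the limits (50 characters, ages 0 to 150) are assumptions made up for this example, not recommendations. The point is the shape: validate type and length on the server, then pass values to the database as parameters instead of building SQL strings.

```python
import sqlite3

MAX_NAME_LEN = 50  # assumed limit, for illustration only


def validate_signup(payload: dict) -> tuple:
    """Return (name, age) if the payload is acceptable, else raise ValueError."""
    name = payload.get("name")
    age = payload.get("age")

    if not isinstance(name, str):
        raise ValueError("name must be a string")
    name = name.strip()
    if not 1 <= len(name) <= MAX_NAME_LEN:
        raise ValueError("name must be 1 to 50 characters")

    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(age, bool) or not isinstance(age, int):
        raise ValueError("age must be an integer")
    if not 0 <= age <= 150:
        raise ValueError("age is out of range")

    return name, age


def insert_user(conn: sqlite3.Connection, name: str, age: int) -> None:
    # Parameterized query: the driver passes values separately from the SQL
    # text, so quotes and semicolons in `name` are stored as plain data.
    conn.execute("INSERT INTO users (name, age) VALUES (?, ?)", (name, age))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, age INTEGER)")

# Hypothetical hostile input that would break naive string-built SQL.
hostile = "Robert'); DROP TABLE users;--"
name, age = validate_signup({"name": hostile, "age": 30})
insert_user(conn, name, age)

stored = conn.execute("SELECT name FROM users").fetchall()
print(stored)
```

The hostile string passes validation here because it is a legitimate (if odd) name under these rules. What keeps it harmless is the parameterized query, not character stripping.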

N+1 Queries - Performance Death by a Thousand Cuts

The N+1 query problem is elegant in how invisible it is. You load a list of 50 blog posts. For each post, your code makes a separate database query to fetch the author's name. That's 51 queries (one for the list, plus one per post) instead of 1 or 2. At 50 posts it's sluggish. At 500 posts it's a timeout. At scale, it's a database on its knees.

AI models generate code that works at the unit level. They write a loop, they fetch data inside the loop, the output is correct. The fact that this pattern is catastrophically inefficient at any real load is not something the model will flag unless you know to ask - and ask specifically, with enough context to evaluate the answer.

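A developer reviewing AI-generated ORM code looks for eager loading, checks query counts, and understands the difference between with() and load() in Laravel (eager loading as part of the initial query versus loading a relation after the models have already been fetched), or select_related() vs prefetch_related() in Django (a SQL join versus a separate query per relation, joined in Python). A vibe coder sees data on the screen and moves on.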
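
The 50-post scenario can be reproduced without any ORM. The sketch below uses plain sqlite3 and a tiny hypothetical CountingDB wrapper (not a real library class) that counts how many queries each approach issues. The schema and data are invented for the example.

```python
import sqlite3


class CountingDB:
    """Hypothetical helper: runs queries and counts how many were issued."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.count = 0

    def query(self, sql: str, params: tuple = ()) -> list:
        self.count += 1
        return self.conn.execute(sql, params).fetchall()


def posts_with_authors_n_plus_one(db: CountingDB) -> list:
    # One query for the list, then one more query per post.
    rows = db.query("SELECT title, author_id FROM posts ORDER BY id")
    result = []
    for title, author_id in rows:
        author = db.query("SELECT name FROM authors WHERE id = ?", (author_id,))
        result.append((title, author[0][0]))
    return result


def posts_with_authors_joined(db: CountingDB) -> list:
    # A single query: the database joins posts to authors itself.
    return db.query(
        "SELECT p.title, a.name FROM posts p "
        "JOIN authors a ON a.id = p.author_id ORDER BY p.id"
    )


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT, author_id INTEGER);
    """
)
conn.executemany(
    "INSERT INTO authors VALUES (?, ?)",
    [(i, f"author{i}") for i in range(1, 6)],
)
conn.executemany(
    "INSERT INTO posts VALUES (?, ?, ?)",
    [(i, f"post{i}", (i % 5) + 1) for i in range(1, 51)],
)

slow_db = CountingDB(conn)
slow = posts_with_authors_n_plus_one(slow_db)

fast_db = CountingDB(conn)
fast = posts_with_authors_joined(fast_db)

print(slow_db.count, fast_db.count)  # 51 1
print(slow == fast)  # True
```

Both functions return identical data, so a check that only looks at the rendered page cannot tell them apart. Only the query count reveals the difference, which is what eager loading in an ORM is meant to fix.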

Why the AI Doesn't Save You From Yourself

The uncomfortable truth about AI coding assistants is that they reflect your ability to direct them. They are not autonomous quality gatekeepers. They are extremely capable tools that produce output calibrated to what you ask for and how you ask for it.

A senior developer using an AI agent gets dramatically better output than a non-developer using the same tool - not because the AI behaves differently, but because the developer:

Knows what constraints to impose upfront - specifying security requirements, performance expectations, and architectural patterns before a single line is generated, rather than hoping the model assumes them.

Recognizes bad output when they see it - even clean, well-formatted code can be structurally wrong, and you need domain knowledge to catch the difference between code that runs and code that holds up.

Asks the right follow-up questions - not "is this secure?" but "does this output escape user input before rendering?" Not "is this efficient?" but "how many queries does this generate for a result set of 1,000 records?"

Understands the blast radius of mistakes - knowing that a missed sanitization step isn't just a bug but a potential data breach changes how carefully you verify the output.

The AI doesn't have skin in the game. You do. And if you don't know what you're looking at, the model's confidence becomes your liability.

Agentic Development Is Not "More Vibe Coding With Extra Steps"

There's a tendency to frame agentic development as vibe coding with better tooling - longer context windows, multi-step workflows, automated testing hooks. That framing misses the point entirely.

Agentic development is a discipline. It means defining clear task boundaries for AI agents, writing precise prompts that encode your actual requirements (including security and performance constraints), validating outputs against known-good criteria, and maintaining a mental model of what the system is supposed to do at every layer.
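
One way to read "validating outputs against known-good criteria" is to write the criteria as executable checks before accepting what an agent produces. The sketch below is only an illustration under that assumption. The check function, the hostile inputs, and the two "agent-produced" candidates are all hypothetical, and a real acceptance suite would cover far more than this.

```python
from html import escape
from typing import Callable, List

# Hypothetical hostile inputs that the acceptance check feeds to a candidate.
HOSTILE_INPUTS = [
    "<script>alert(1)</script>",
    '"><img src=x onerror=alert(1)>',
    "plain text",
]


def check_escapes_markup(render: Callable[[str], str]) -> List[str]:
    """Return the inputs whose markup survived rendering unescaped."""
    failures = []
    for value in HOSTILE_INPUTS:
        output = render(value).lower()
        if "<script" in output or "<img" in output:
            failures.append(value)
    return failures


# Two stand-ins for agent-generated code. Both "work" on ordinary input.
def candidate_v1(text: str) -> str:
    return f"<p>{text}</p>"


def candidate_v2(text: str) -> str:
    return f"<p>{escape(text)}</p>"


v1_failures = check_escapes_markup(candidate_v1)
v2_failures = check_escapes_markup(candidate_v2)

print(len(v1_failures), len(v2_failures))  # 2 0
```

The check is deliberately narrow. Its value is that the requirement was written down by someone who knew to ask for it, so the agent's output is measured against that requirement and not against its own summary of the work.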

The "agentic" part refers to the AI's ability to take multi-step actions autonomously. But autonomy without oversight is just automation of errors at scale. The developer's role doesn't shrink in agentic workflows - it shifts. You spend less time writing boilerplate and more time defining constraints, reviewing outputs critically, and making architectural decisions that the model is not equipped to make.

If you hand an agent a vague task and trust it to handle the details, you haven't adopted agentic development. You've just automated your vibe coding.

The Real Cost of Shipping AI Slop

Applications built without foundational development knowledge don't fail dramatically. They fail slowly, in ways that are hard to trace and expensive to fix. A security vulnerability sits dormant until it's exploited. An N+1 query performs fine until traffic grows. A missing validation rule causes no problems until someone submits the one input you didn't anticipate.

By the time these issues surface, the original "developer" has often moved on, the codebase is a tangle of AI-generated patterns with no coherent architecture, and the cost of remediation dwarfs what proper development would have cost from the start.

The vibe coder's app didn't just ship with bugs. It shipped with structural debt that compounds every time someone adds a feature using the same approach - because the patterns are wrong, and nobody on the team knows enough to know they're wrong.

What Separates a Tool User From a Developer

AI has genuinely changed what's possible for a single developer. Tasks that used to require a team can now be executed by one person with strong domain knowledge and good AI tooling. That's real, and it's significant.

But the thing that makes agentic development powerful is the same thing that makes vibe coding dangerous: the AI multiplies what you bring to it. Strong fundamentals, multiplied by AI leverage, produce remarkable output. Shallow understanding, multiplied by the same leverage, produces the same shallow application - just faster and with more confidence than it deserves.

Knowing what XSS is, understanding why N+1 queries kill performance, recognizing what proper input sanitization looks like - these aren't gatekeeping credentials for an exclusive club. They're the baseline competencies that determine whether AI assistance makes you more effective or just more productive at building the wrong thing.

The AI will generate the code. You still have to know what good looks like.